Opus 4.7 Is Live, The Cyber Race Is On, Stanford Shows the Receipts

Opus 4.7 was released today. OpenAI and Anthropic have locked down cyber AI. Stanford put the "AI has slowed down" debate to rest.

Hey! Welcome to the latest Creators’ AI Edition.

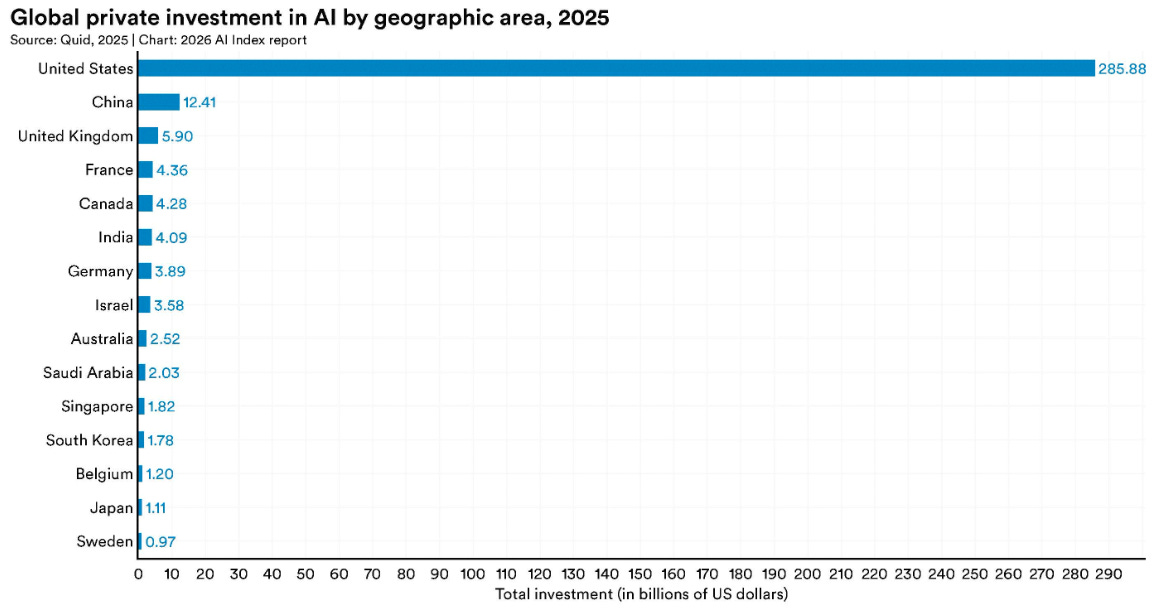

Anthropic shipped Claude Opus 4.7 this week: same price as 4.6, but with 3x sharper vision, stronger coding, and the first built-in cybersecurity safeguards. OpenAI fired back on April 14 with GPT-5.4-Cyber, its own locked-down model for verified security professionals, while the Spud (GPT-5.5) launch window keeps slipping. Meanwhile, Stanford released its 2026 AI Index and the numbers don't lie: 53% global adoption in three years, SWE-bench coding scores jumping from 60% to near 100% in a single year, $581.7 billion in corporate AI investment. Today we have:

Featured Materials 🎟️

News of the week 🌍

Useful tools ⚒️

Weekly Guides 📕

AI Meme of the Week 🤡

AI Tweet of the Week 🐦

(Bonus) Materials 🎁


Stop losing contacts. Let AI remember everyone for you.

ConnectMachine is your private AI agent for professional networking. Describe who you're looking for in plain English — ConnectMachine finds them, surfaces the context of when and where you met, and helps you reconnect at exactly the right moment. No scattered business cards. No noisy social feeds. No lost opportunities slipping through the cracks.

Built for founders, executives, and operators who network with intention — not volume. From digital business cards with selective sharing to calendar-aware meeting scheduling — ConnectMachine handles your entire relationship layer, quietly and privately.

Featured Materials 🎟️

Claude Opus 4.7 Is Out — And It Brought a Design Tool That Spooked Adobe 🖥️

Claude Opus 4.7 is out: same price as 4.6, better at almost everything that matters. Anthropic shipped the upgrade on April 16, and the steady release cadence holds: Opus 4.5 in November, 4.6 in February, 4.7 in April.

What’s new:

13% lift on coding benchmarks — with particular gains on the hardest tasks. Users who’ve been handing off complex multi-session work report they can now do it with less supervision.

3.75 megapixel vision — up from 1.15 megapixels on Opus 4.6. That’s a 3x jump in visual capacity. Screenshots, diagrams, and dense documents now come through at actual fidelity, and computer use coordinates map 1:1 with real pixels.

New xhigh effort level — a setting between “high” and “max” that gives developers finer control over the reasoning-vs-latency tradeoff. Claude Code now defaults to xhigh for all plans.

/ultrareview in Claude Code — runs a dedicated review pass on your changes and flags what a careful human reviewer would catch before you commit.

Task budgets (beta) — developers get finer control over how Claude allocates reasoning on longer runs.

Cybersecurity safeguards — Opus 4.7 is the first publicly available Claude model with automated detection and blocking of prohibited cybersecurity uses. Anthropic is using this release to test guardrails before any broader Mythos-class rollout.

Pricing is unchanged: $5 input / $25 output per million tokens. Available now on claude.ai, the API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry.
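To see what those rates mean for a real bill, here is a minimal sketch in Python. The per-million-token prices come from this issue; the function name and the example workload are hypothetical, and actual token counts for the same text may differ between model versions.

```python
# Opus 4.7 API rates as quoted in this issue, in USD per million tokens.
INPUT_USD_PER_MTOK = 5.00
OUTPUT_USD_PER_MTOK = 25.00


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the API cost in USD for one workload at the quoted rates."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MTOK
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MTOK
    return input_cost + output_cost


if __name__ == "__main__":
    # Hypothetical workload: 200k tokens in, 50k tokens out.
    print(f"${estimate_cost_usd(200_000, 50_000):.2f}")  # prints $2.25
```

Output tokens cost five times as much as input tokens, so long generated responses drive the bill far more than long prompts do.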

One caveat: Opus 4.7 trails GPT-5.4 on Terminal-Bench 2.0 (69.4% vs 75.1%) and softens slightly on BrowseComp vs 4.6. If those two workflows drive your production use, factor that in before migrating.

The design tool story:

The model wasn't the only news. On April 14, The Information reported Anthropic is also launching an AI design tool that generates websites, landing pages, and presentations from plain-text prompts — no design skills required. Shares of Adobe, Figma, Wix, and GoDaddy closed in the red the same day. Anthropic has already partnered with Figma to convert AI-generated code into editable design files. Until now, Anthropic only built chat interfaces and developer tools. Gamma, Google Stitch, and Canva owned visual and creative workflows. That changes. As of publication, the design tool hasn’t officially dropped — watch Anthropic’s release notes this week.

Anthropic is shipping its most capable available model — at the same price as its previous one — while simultaneously building a product that replaces the starting point of design work. That’s not an incremental update. That’s a product strategy.

OpenAI Launches GPT-5.4-Cyber — The AI Cybersecurity Arms Race Is Now Official 🔐

OpenAI's answer to Project Glasswing just landed. GPT-5.4-Cyber is a fine-tuned variant of GPT-5.4 built exclusively for defensive cybersecurity — distributed only to verified security professionals through an expanded Trusted Access for Cyber (TAC) program. The announcement dropped April 14.

What GPT-5.4-Cyber can do that GPT-5.4 can’t:

Binary reverse engineering — analyze compiled software for malware, vulnerabilities, and security flaws without source code access. This is a capability that was previously too sensitive to give broadly.

Fewer refusals on dual-use queries — security professionals kept hitting guardrails when asking legitimate penetration testing questions. GPT-5.4-Cyber removes that friction for vetted users.

Advanced defensive workflows — vulnerability prioritization, impact analysis, repair proposal generation.

OpenAI’s Codex Security has already contributed to 3,000+ critical and high-severity vulnerability fixes across open-source projects since launch.

How to get access:

Two paths. Individual security professionals can verify at chatgpt.com/cyber. Enterprise teams request access through an OpenAI representative. The model deploys gradually — vetted vendors first, broader rollout to come.

Why both labs are doing this at the same time:

Anthropic’s Claude Mythos found thousands of zero-day vulnerabilities autonomously — including a 17-year-old remote code execution flaw in FreeBSD — which spooked banks, governments, and enterprise security teams. Both OpenAI and Anthropic are now racing to establish the “responsible” model for deploying powerful cybersecurity AI: lock down the most dangerous capabilities, but give verified defenders a head start over attackers.

Two of the most capable AI companies in the world are simultaneously building models too dangerous to release publicly — and creating exclusive access tiers for people who need to use them anyway. That’s not safety theater. That’s a real governance problem being handled in real time.

Source: OpenAI Blog | Axios | The Hacker News

Stanford’s 2026 AI Index: The Annual Report Card Just Dropped 📊

SWE-bench — the benchmark where AI models fix real GitHub issues — went from 60% to near 100% in a single year. Generative AI hit 53% global adoption faster than the PC or the internet. Stanford's 2026 AI Index dropped this week, and it's the most important data document in AI right now.

The headline findings:

Humanity’s Last Exam scores jumped 30 percentage points in a single year — from 8.8% (GPT-4 era) to 38.3%. Models as of April 2026 (Claude Opus 4.6, Gemini 3.1 Pro) now top 50%.

The US-China model performance gap has effectively closed. Anthropic leads Arena Elo (1,503), followed by xAI (1,495), Google (1,494), OpenAI (1,481), then Alibaba (1,449) and DeepSeek (1,424). Just 79 Elo points, about 5%, separate first from sixth.

$581.7 billion in global corporate AI investment in 2025 — a 130% jump from the previous year. Generative AI accounted for nearly half, growing 200%+.

Organizational adoption reached 88%. Four in five university students use generative AI for coursework. Only 6% of teachers say their school’s AI policies are actually clear.

The “jagged frontier” problem persists. Models that solve PhD-level physics problems still struggle with clock-reading tasks. Robots succeed at only 12% of household tasks.

Transparency is falling. The Foundation Model Transparency Index dropped from 58 to 40 points average. The most capable models disclose the least.
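For a feel of how small the Arena gap is, the classic Elo expected-score formula turns a rating difference into an approximate head-to-head win rate. This is a rough sketch: it assumes the Arena scores above behave like standard Elo ratings on a 400-point scale, which may not match the leaderboard's exact methodology, and the function name is ours.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the standard Elo formula."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


if __name__ == "__main__":
    # Arena scores quoted above: first place (1,503) vs sixth place (1,424).
    top, sixth = 1503, 1424
    print(f"Rating gap: {top - sixth} points")
    print(f"Expected win rate for the leader: {elo_expected_score(top, sixth):.1%}")
```

Under that assumption, the 79-point gap means the top-ranked model would be preferred in roughly six of ten head-to-head comparisons against the sixth-ranked one.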

The context that matters for creators:

Estimated U.S. consumer surplus from generative AI reached $172 billion annually by early 2026. The median value per user tripled in a single year — while the tools remain mostly free. Marketing output productivity is up 50% in organizations that’ve integrated AI into creative workflows. The tools are here. The gap between users and non-users is widening every month.

AI didn’t plateau. It didn’t hit a wall. SWE-bench went from 60% to near 100% in one year. That pace, if it continues, doesn’t give anyone a comfortable window to “wait and see.”

Source: Stanford HAI | MIT Technology Review | IEEE Spectrum

News of the week 🌍

GPT-5.5 “Spud” April 14 Rumor Busted — New Window: April 21 to May 25 🥔 — The AI community convinced itself GPT-5.5 would drop April 14. It didn’t. The rumor came from an unverifiable “insider” post with no primary sources — OpenAI never corroborated it. What remains confirmed: pretraining wrapped March 24 at the Stargate data center in Abilene, Texas. Altman said “a few weeks.” Brockman called it “two years of research” with a “big model feel.” Polymarket still prices 78% probability of release by April 30. The new realistic window is April 21 to May 25. For context: while the community was waiting on Spud, Anthropic shipped Opus 4.7. The model race is too close to time bets around a single player.

Cerebras Targets IPO This Week — OpenAI Chip Deal Now $20B+ 💾 — Cerebras Systems, the AI chip startup that makes wafer-scale processors (56x larger than Nvidia’s H100), is targeting a Nasdaq IPO as soon as this week at a $35B+ valuation — a 60% premium over its $22B February valuation. The deal anchor: OpenAI agreed to pay Cerebras $20B+ for server chips, potentially receiving Cerebras equity in return. For context, Cerebras chips are up to 20x faster than Nvidia GPUs on AI inference workloads. This is the first serious public test of whether a non-Nvidia AI chip company can command frontier valuations.

Snap Cuts 1,000 Jobs, CEO Explicitly Cites AI 📉 — Snap laid off approximately 1,000 employees — 16% of its global workforce — with CEO Evan Spiegel stating in a company-wide memo that AI is replacing repetitive work. The cuts will save over $500 million by H2 2026. Affected staff receive four-month severance. This is one of the clearest public examples of a major consumer tech company directly attributing headcount reduction to AI capability — not to restructuring, not to market conditions. Read alongside Block’s 40% cut in February.

EU AI Act Enforcement: Four Months Away — and Europe Is Already Blinking ⚖️ — The EU AI Act's high-risk AI enforcement deadline lands August 2, 2026. This week Amnesty International and 127 civil society organizations published a joint warning: the European Commission's latest "simplification" proposals quietly weaken both the AI Act and GDPR under pressure from Big Tech lobbying. For any team selling to European clients or operating EU infrastructure, the window to audit AI tools is now under four months.

OpenAI Acquires TBPN — Its First Ever Media Company 📺 — OpenAI announced on April 8 that it acquired TBPN, the Technology Business Programming Network — a daily live tech and business show that's become a cult phenomenon in Silicon Valley. First media acquisition in OpenAI's history. Deal terms undisclosed. The move signals OpenAI is building a content distribution layer, not just a model company.

Useful tools ⚒️

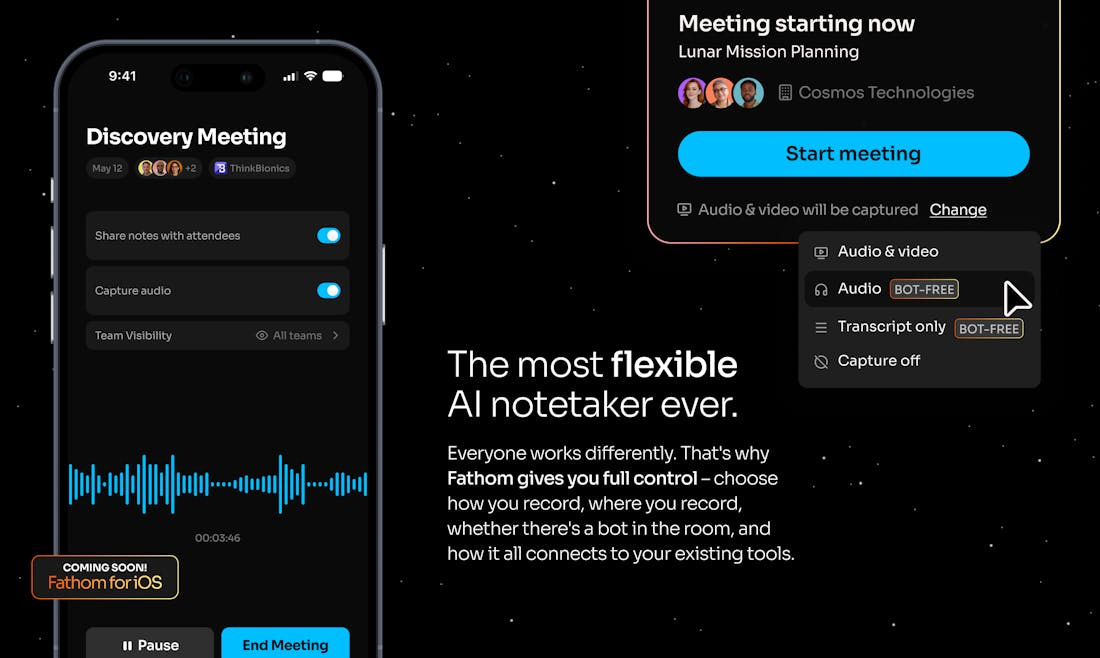

⭐ Fathom 3.0 — AI meeting notetaker that now reads your screen. Version 3.0 adds fact-checking via Claude and ChatGPT Vision, so it can analyze shared documents, decks and screenshots during the call — not just transcribe audio. #1 on Product Hunt this week. Free plan available.

Figma for Agents: Connect AI agents directly to your design system. Agents can now read components, tokens, and guidelines from Figma and build UI that matches your brand without re-briefing every session. Launched this week, just as reports surfaced that Anthropic is building its own AI design tool.

Claude Code Routines — Put Claude Code tasks on autopilot with reusable smart routines. Define a workflow once — lint, test, deploy, summarize changes — and trigger it on schedule or on command. No re-prompting every session. Launched this week alongside the Opus 4.7 rollout.

Krisp Accent Converter for YouTube — Real-time accent conversion for YouTube creators: normalizes your speech so every viewer clearly understands you, regardless of accent or background noise. Removes one of the biggest drop-off reasons for non-native English creators.

Luma Agents — Creative AI agents that plan, iterate, and refine with full visual context. Unlike text-only agent loops, Luma agents see and evaluate what they’re generating — images, video sequences, compositions — and adjust without you re-prompting. Built for video and visual production workflows.


Weekly Guides 📕

Claude Opus 4.7: Full Benchmark Breakdown and Migration Guide — 14 benchmark comparisons across every relevant task, the xhigh effort level explained, the new tokenizer’s impact on costs, and a complete migration checklist from Opus 4.6. Start here before updating any production pipelines.

Stanford 2026 AI Index: 12 Key Takeaways — The condensed version of the 400-page report. 12 data points that actually matter, with charts. The SWE-bench chart alone is worth bookmarking.

OpenAI GPT-5.4-Cyber: How to Apply for Trusted Access — OpenAI’s official page explaining the TAC program tiers, what GPT-5.4-Cyber can do vs. standard GPT-5.4, and both paths to access (individual and enterprise). If you work in security, read this now.

Intent by Augment Code: Official Docs and Getting Started — How to set up your first Space, how the Coordinator + Specialist agent model works, and when spec-driven development makes sense over a single Claude Code session.

How Claude Code Can Be Your AI Teammate: Our foundational deep dive on using Claude Code in production. With Claude Code now defaulting to xhigh effort on Opus 4.7 and adding /ultrareview, the basics matter more than ever. If you’re new to agentic coding, start here before anything else.

AI Meme of the Week 🤡

AI Tweet of the Week 🐦

(Bonus) Materials 🎁

One Man Used Claude and NotebookLM to Save His Mother's Life — Pratik Desai built an AI workflow to manage his mother's Stage 4 cancer care — catching a misdiagnosis and coordinating three critical interventions. The most concrete case study this week of what AI in healthcare actually means.

OpenAI's Vision for the AI Economy: Robot Taxes and a 4-Day Work Week — Sam Altman's 13-page policy paper published this week: a Public Wealth Fund giving every American a stake in AI companies, subsidized 32-hour workweek, taxes on automated labor. Worth reading regardless of whether you agree.

Snap's AI Layoffs: Spiegel's Full Statement — Evan Spiegel’s internal memo in full. Clear, direct, no hedging. Read alongside the Block/Dorsey story from February — two different companies, same underlying argument, very different public perception.

Cerebras IPO: What You Need to Know Before It Prices — Full breakdown of the Cerebras story: the wafer-scale chip architecture, the OpenAI $20B+ deal, the G42 concentration risk, and what the April IPO means for the non-Nvidia AI chip thesis.

If you missed our previous updates, don’t worry, here they are:

Meta Goes Closed Source, Mythos Gets Real, Gemma 4 Beats Llama | Weekly Digest