GPT-5.5 Doubles the Price, Google Goes Full Agent, DeepSeek V4 Resets the Floor | Weekly Digest

GPT-5.5 at $30/M output, Vertex AI becomes Gemini Enterprise & DeepSeek V4 100x cheaper

Hey! Welcome to the latest Creators’ AI Edition.

GPT-5.5 shipped on April 23 and doubled the API price in the same breath — $5 in, $30 out per million tokens. Vertex AI is dead: Google rebranded it to Gemini Enterprise Agent Platform at Cloud Next and unveiled two new TPU generations built for agent workloads. Then DeepSeek V4 dropped on April 24, matched frontier benchmarks, and undercut GPT-5.5 on output by more than 100x. Today we have:

Featured Materials 🎟️

News of the week 🌍

Useful tools ⚒️

Weekly Guides 📕

AI Meme of the Week 🤡

AI Tweet of the Week 🐦

Bonus Materials 🎁

Featured Materials 🎟️

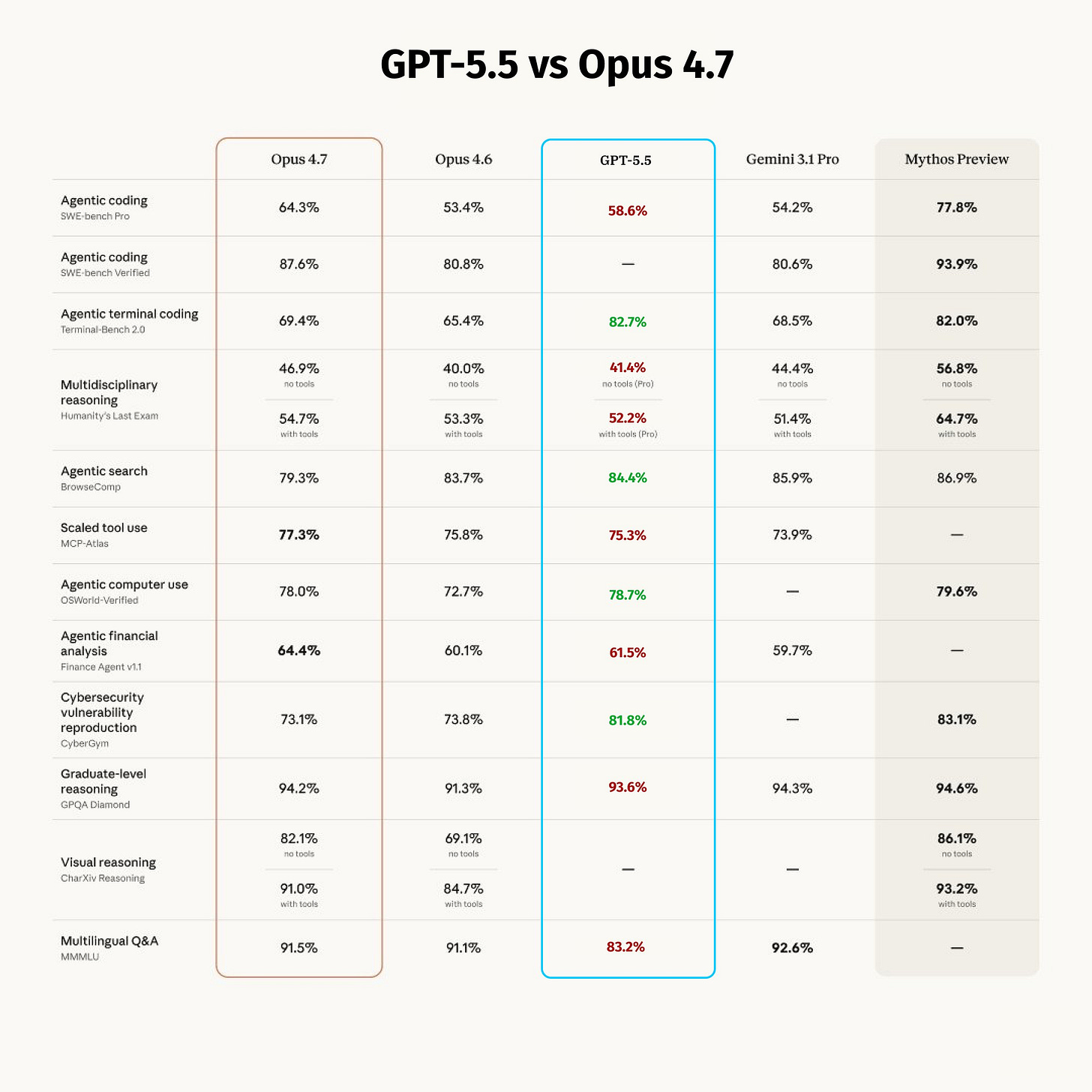

GPT-5.5 Is Smart, Weird, and Twice the Price 💸

GPT-5.5 is out — $5 per million input, $30 per million output. That’s exactly double GPT-5.4 and 20% more than Claude Opus 4.7. OpenAI released it on April 23 to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex. It’s also the first fully retrained base model since GPT-4.5 — every GPT-5.x release between them was a post-training iteration on the same base. GPT-5.5 is not. The benchmarks tell part of the story. The invoice tells the rest.

What actually shipped:

Terminal-Bench 2.0: 82.7% — up from 74.2% on GPT-5.4, new SOTA among all models

SWE-Bench Pro: 58.6% — Opus 4.7 still leads at 64.3%

1M context window — first OpenAI model to match Gemini 2.5 Pro and DeepSeek V4

Agent-first architecture — OpenAI describes it as a system that “takes a sequence of actions, uses tools, checks its own work, and keeps going” without re-prompting

The pricing story:

GPT-5.4 was the cheapest frontier model by a wide margin. That was the point. Sam Altman framed the hike as reflecting “the true cost of frontier compute.” OpenAI says the effective cost increase is closer to 20% once token efficiency is factored in, because GPT-5.5 uses fewer tokens per task than 5.4. Whether that math holds for your workload is for you to verify.
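A quick way to check that claim against your own workload: per-task cost is price times tokens used, so a 2x price rise only nets out to a 20% increase if GPT-5.5 uses roughly 60% of the tokens GPT-5.4 needed for the same task. A minimal sketch, assuming a GPT-5.4 output price of $15 per million tokens (implied by "exactly double", not quoted directly) and a token ratio you would measure yourself:

```python
def effective_cost_ratio(price_ratio: float, token_ratio: float) -> float:
    """Per-task cost of the new model relative to the old one.

    price_ratio: new price / old price (per token).
    token_ratio: tokens the new model uses / tokens the old model used,
                 for the same task. This is the number you have to measure.
    """
    return price_ratio * token_ratio


def required_token_ratio(price_ratio: float, target_cost_ratio: float) -> float:
    """Token ratio needed for the per-task cost to land on the target."""
    return target_cost_ratio / price_ratio


# Assumption: GPT-5.4 output was $15/M, i.e. half of GPT-5.5's $30/M.
PRICE_RATIO = 30.0 / 15.0

# OpenAI's "closer to 20%" claim corresponds to a target cost ratio of 1.20.
needed = required_token_ratio(PRICE_RATIO, 1.20)
print(f"GPT-5.5 must use about {needed:.0%} of GPT-5.4's tokens per task")

# Hypothetical measurement: your tasks only got 10% shorter in tokens.
print(f"Cost ratio at 0.90 token ratio: {effective_cost_ratio(PRICE_RATIO, 0.90):.2f}x")
```

If your measured token ratio is above about 0.6, your bill rises by more than the 20% figure.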

Source: OpenAI | OpenAI Community | LLM Stats

Vertex AI Is Dead, Long Live Gemini Enterprise 🏢

Vertex AI no longer exists. At Google Cloud Next on April 22 in Las Vegas, Google rebranded it to Gemini Enterprise Agent Platform and paired the launch with two new TPU generations. The rebrand is bigger than the silicon.

The TPUs:

TPU 8t (training) — 9,600 chips per superpod, near-linear scaling to 1 million chips across data centers, 3x the compute of the previous generation

TPU 8i (inference) — 3x the on-chip SRAM, 80% better performance-per-dollar, purpose-built for low-latency agentic workloads and MoE models like DeepSeek V4

The agent platform:

Gemini Enterprise ships with a built-in orchestration layer, Agent Studio (low-code builder), persistent Memory Bank, and a governance layer. The key new protocol: A2A (Agent-to-Agent) v1.0, now in production at 150 organizations — it lets agents built on different frameworks (LangGraph, CrewAI, LlamaIndex, AutoGen) hand off tasks to one another without understanding each other’s internal architecture. A Salesforce agent can call a Google agent, which calls a ServiceNow agent, all via A2A.
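The handoff idea can be illustrated with a toy sketch. This is not the A2A wire format or any vendor SDK: `Task`, `Registry`, and the three agent functions are hypothetical names invented here. The only point is that each agent sees a shared task shape and a way to call the next agent by name, never the other agent's internals.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class Task:
    """Shared task shape. Hypothetical, not taken from the A2A spec."""
    description: str
    trail: Tuple[str, ...] = ()  # names of agents that have handled the task


Handler = Callable[[Task, "Registry"], Task]


class Registry:
    """Maps agent names to opaque handlers, standing in for agent discovery."""

    def __init__(self) -> None:
        self._agents: Dict[str, Handler] = {}

    def register(self, name: str, handler: Handler) -> None:
        self._agents[name] = handler

    def hand_off(self, name: str, task: Task) -> Task:
        # Record the hop, then let the target agent do whatever it does.
        stamped = Task(task.description, task.trail + (name,))
        return self._agents[name](stamped, self)


# Each agent could be built on a different framework; callers cannot tell.
def salesforce_agent(task: Task, registry: Registry) -> Task:
    return registry.hand_off("google", task)


def google_agent(task: Task, registry: Registry) -> Task:
    return registry.hand_off("servicenow", task)


def servicenow_agent(task: Task, registry: Registry) -> Task:
    return task  # end of the chain


registry = Registry()
registry.register("salesforce", salesforce_agent)
registry.register("google", google_agent)
registry.register("servicenow", servicenow_agent)

result = registry.hand_off("salesforce", Task("open a ticket for the renewal"))
print(" -> ".join(result.trail))
```

A real deployment would replace the in-process registry with network calls and add authentication and governance, which is the part the platform is selling.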

Over 40 launch partners including Box, Workday, Salesforce, and ServiceNow committed on day one. Google also announced a $750M fund for agentic AI startups with dedicated allocations for Europe, Japan, and India.

The Anthropic number buried in the keynote:

Anthropic crossed $30B in annualized TPU revenue on Google Cloud in April 2026, with 80% from enterprise API usage. Every TPU performance gain announced this week directly reduces Anthropic’s inference cost structure.

Vertex AI was a model-serving tool. Gemini Enterprise is a bid to be the operating system for agent economies. Microsoft now has 18 months to answer.

Source: Google Cloud | Google Blog | Reuters

DeepSeek V4 Drops: Near-Frontier Quality, Fraction of the Price🧨

DeepSeek V4 landed on April 24 in two variants — V4-Pro (1.6T total / 49B active) and V4-Flash (284B / 13B active), both with 1M context. V4-Pro scored 3,206 on Codeforces, ahead of GPT-5.4’s 3,168. On SWE-Verified: 80.6%. Then they published the price sheet.

The numbers that reset the floor:

V4-Flash: $0.14 input / $0.28 output per million tokens

V4-Pro: $1.74 input / $3.48 output per million tokens

GPT-5.5 for comparison: $5.00 / $30.00

Flash output is more than 100x cheaper than GPT-5.5 ($0.28 vs. $30.00 per million tokens) while competitive on reasoning and code. Pro is still nearly 9x cheaper than GPT-5.5 on output, and roughly 2.9x cheaper on input, at frontier-adjacent quality.
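The ratios are easy to reproduce from the price sheet. A small sketch using only the per-million-token prices listed above; the job size in the last lines is a made-up example, not a benchmark:

```python
# (input, output) prices in USD per million tokens, as quoted above.
PRICES = {
    "GPT-5.5": (5.00, 30.00),
    "V4-Pro": (1.74, 3.48),
    "V4-Flash": (0.14, 0.28),
}


def price_ratios(baseline: str, other: str) -> tuple:
    """How many times cheaper `other` is than `baseline`, as (input, output)."""
    base_in, base_out = PRICES[baseline]
    other_in, other_out = PRICES[other]
    return base_in / other_in, base_out / other_out


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for a job with the given token counts."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


for model in ("V4-Pro", "V4-Flash"):
    ratio_in, ratio_out = price_ratios("GPT-5.5", model)
    print(f"{model}: {ratio_in:.1f}x cheaper on input, {ratio_out:.1f}x on output")

# Hypothetical job: 2M input tokens, 500K output tokens.
for model in PRICES:
    print(f"{model}: ${job_cost(model, 2_000_000, 500_000):.2f}")
```

Because input and output ratios differ, the blended saving depends on how output-heavy your workload is.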

The license and the catch:

Weights released under a Modified MIT License — commercial use and redistribution allowed, but a non-compete clause prevents using them to train competing frontier models. The hosted API runs on DeepSeek’s own H800/H100 fleet in China. Huawei simultaneously announced that its Ascend 950 chips will fully support V4 out of the box — the first frontier-class model deployable at scale on Chinese domestic hardware without US-manufactured GPUs.

Every frontier lab spent 2025 arguing scaling laws still hold. DeepSeek spent 2025 proving architecture and data curation beat raw FLOPs. V4 is the receipt.

Source: DeepSeek | Hugging Face

News of the week 🌍

SpaceX Gets an Option to Buy Cursor for $60B 🚀 — SpaceX struck a deal with Cursor to build a next-generation coding and knowledge-work AI, with an option to acquire the startup for $60B later this year or pay $10B for the partnership instead. The signal is obvious: coding agents are no longer “developer productivity tools.” They’re becoming strategic infrastructure.

Claude Opus 4.7 Lands in Amazon Bedrock ☁️ — Anthropic made Claude Opus 4.7 available in Amazon Bedrock on April 20, roughly three weeks after the API launch. Bedrock also added prompt caching with up to 90% discount on repeated context — notably cheaper than Anthropic’s direct equivalent for high-volume repeated prompts.

Kimi K2.6 Launches Open-Source, Aimed Directly at GPT-5.4 🧨 — Moonshot AI released Kimi K2.6 with a pointed message: this is a frontier coding and reasoning model under Modified MIT. It’s a 1T-parameter MoE model with 32B active parameters, 256K context, and native multimodal input. Pricing: $0.95 input / $4.00 output per million tokens, less than half the price of GPT-5.4 at launch. Combined with DeepSeek V4 dropping on April 24, this week produced two significant Chinese open-weight releases in a single four-day window.

Cerebras Files S-1 — Nasdaq IPO Targeting $22–25B 📈 — Cerebras Systems filed its S-1, targeting the CBRS ticker on Nasdaq at a $22–25B valuation. The filing confirms a multi-year, $20B+ compute deal with OpenAI, now the company’s largest single customer and the deal that flipped its revenue concentration story from 87% UAE to a more diversified base. Revenue hit $510M in 2025, up 76% year-over-year. The roadshow begins in early May, with a mid-May listing window.

Perplexity Personal Computer Hits General Availability 🖥️ — The Mac app that ingests your local files, emails, and calendar and answers across all of them in a single retrieval pass. It was free during the public beta; a Pro subscription is required at GA.

Sam Altman Publishes AI Industrial Policy Paper 📄 — A 13-page document proposing a Public Wealth Fund giving every American a stake in AI companies, a subsidized 32-hour workweek, and taxes on automated labor. Whether you agree or not, this is the framing OpenAI is taking into Washington.

Useful tools ⚒️

⭐ Dune — A physical 3-button Mac keypad that reads which app is in the foreground and automatically changes what its keys do in real-time. In GitHub: raise a PR, approve/reject. In your meeting app: join, toggle mic, control camera. Triggers AI agents with one tap. #1 on Product Hunt this week with 558 upvotes. $99 one-time, macOS only.

Pegasus 1.5 by TwelveLabs — AI model that transforms raw video into structured, timestamped metadata: scenes, objects, actions, dialogue — all indexed and searchable. Drop a 2-hour recording in, get a queryable knowledge base out. For anyone building on top of video content. 200 upvotes at launch today. Free tier available.

Claude Desktop Buddy — Bring Claude into the physical world via maker hardware. Connects Claude’s capabilities to physical inputs and outputs — buttons, LEDs, sensors. #2 on Product Hunt this week with 387 upvotes. Open-source, free.

Subspace — All your coding agents in one app with persistent context. Multi-agent coordination, sandboxed runs, Git workflows, parallel task handling across local and cloud setups. Free tier available.

The New Waydev — Measure the full AI SDLC from token to production. Tracks what your AI coding agents are actually shipping, where the bottlenecks are, and how token spend maps to output quality. #3 on Product Hunt this week. Free trial available.

Weekly Guides 📕

Introducing GPT-5.5 — Official OpenAI Announcement — The full release post: benchmark table, pricing rationale, Codex integration details, and the “new class of intelligence” framing. The SWE-Bench Pro vs Opus 4.7 comparison at the bottom is the most honest part.

Google TPU 8t and 8i Technical Deep Dive — Google’s own breakdown of the chip architecture, Virgo Network topology, and Boardfly interconnect. If you’re building on Google Cloud or tracking Anthropic’s inference cost trajectory, start here.

How to Use the DeepSeek V4 API: Full Migration Guide — Published today alongside the launch. OpenAI-compatible endpoint, one base-URL swap from GPT-5.5, Python and Node examples, thinking-mode math, and the July 24 deprecation deadline for old model IDs. If you're evaluating V4 for production, start here.

DeepSeek V4 — Full Breakdown by Simon Willison — The clearest technical summary of V4-Pro and V4-Flash: architecture, pricing math, Modified MIT license terms, and honest benchmark context. Written same day as launch.

Gemini Enterprise Agent Platform — What Actually Changed from Vertex AI — The New Stack’s breakdown of what’s new vs. rebranded, A2A protocol v1.0 explained, and where existing Vertex AI pipelines stand.
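The "one base-URL swap" described in the DeepSeek V4 migration guide above can be sketched with the standard library alone. Treat the specifics as assumptions: the base URLs are the commonly documented ones for each provider, the model IDs `gpt-5.5` and `deepseek-v4-flash` are placeholders (check each provider's model list), and `build_chat_request` is a helper invented here. The request is only built, never sent.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Assumed provider settings; model IDs are hypothetical placeholders.
PROVIDERS = {
    "openai": {"base_url": "https://api.openai.com/v1", "model": "gpt-5.5"},
    "deepseek": {"base_url": "https://api.deepseek.com", "model": "deepseek-v4-flash"},
}

# Switching providers changes the base URL, the key, and the model ID.
# The request shape stays the same.
for name, cfg in PROVIDERS.items():
    req = build_chat_request(cfg["base_url"], "sk-your-key", cfg["model"], "Hello")
    print(name, req.get_method(), req.full_url)
```

Sending the request is a matter of passing it to `urllib.request.urlopen` with a real key. An identical request shape does not guarantee identical behavior, so evaluate outputs on your own prompts before switching production traffic.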

AI Meme of the Week 🤡

AI Tweet of the Week 🐦

Bonus Materials 🎁

Cerebras S-1 SEC Filing — Full Document — The full prospectus filed April 17. The OpenAI deal structure starts on page 47. The UAE revenue concentration risk is more nuanced than the headlines suggest.

GPT-5.5 vs Opus 4.7 — Full Benchmark Comparison — Honest cross-benchmark breakdown. GPT-5.5 wins on Terminal-Bench and long-horizon planning. Opus 4.7 wins on SWE-Bench Pro, MCP-Atlas, multilingual, and agentic finance. Pick by workload, not by headline.

Cursor Is Raising $2B at a $50B Valuation — The round is already oversubscribed. $2B ARR in February, $6B projected by year-end. Nvidia joining as strategic investor alongside a16z and Thrive. Six months ago the valuation was $29B. The AI coding market is compressing timelines that used to take a decade into a single fiscal year.

The MCP Security Problem Just Got Real — OX Security found critical remote-code-execution issues around MCP server handling, with reports saying major SDKs and a large number of MCP instances may be affected. Worth a read if you care about MCP, agents, and “what can go wrong when your AI gets tools.”

If you missed our previous updates, don’t worry, here they are:

Opus 4.7 Is Live, Cyber Arms Race Official, Stanford Shows the Receipts | Weekly Digest

![DeepSeek V4 vs Claude 4.5 vs GPT-5.2: Complete AI Model Comparison [2026]](https://substackcdn.com/image/fetch/$s_!4F8m!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F96afbca6-406d-427b-926b-8c16e7889512_2400x1350.png)