Google’s Banana, AI2 for Science | Weekly Edition

PLUS HOT AI Tools & Tutorials

Hey there! Welcome to your latest Creators’ AI Edition.

This week, Google drops its viral “banana” image model, Claude lands in Chrome, and Google Vids gets a serious AI upgrade. OpenAI adds image inputs to Codex, Translate gets Gemini-powered conversations, and AI2 launches Asta to speed up science. Plus, Anthropic reveals how educators really use Claude, and we’ve got fresh tools, guides, and bonus reads to keep you ahead.

Let’s dive in!

Featured Materials 🎟️

News of the week 🌍

Useful tools ⚒️

Weekly Guides 📕

AI Meme of the Week 🤡

AI Tweet of the Week 🐦

(Bonus) Materials 🎁

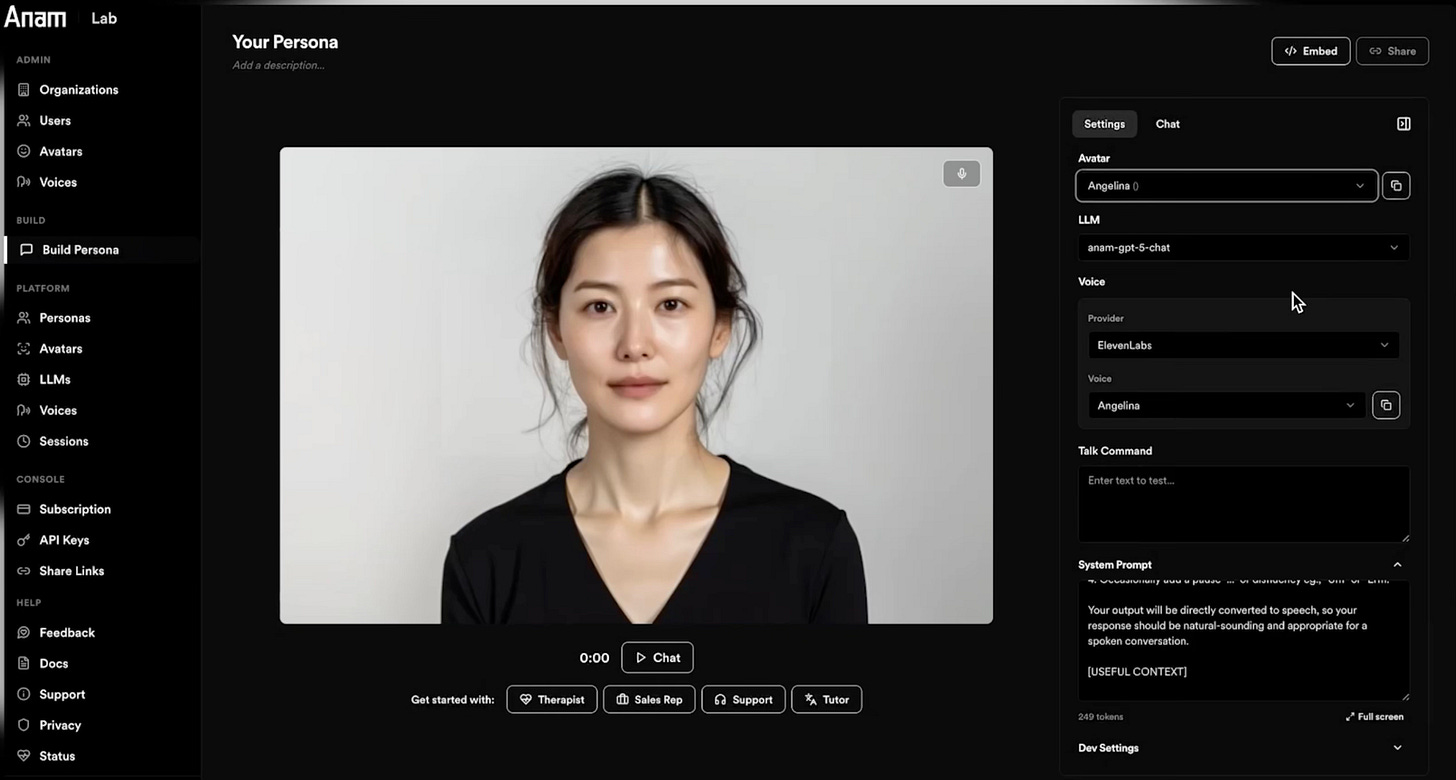

Give Your App a Human Face in Minutes

Spin up an AI persona in minutes with Anam Lab. Choose a face, voice, and brain, then drop it into your app with a few lines of code. Anam is putting a face on the internet, making digital interactions feel human, instant, and alive.

Featured Materials 🎟️

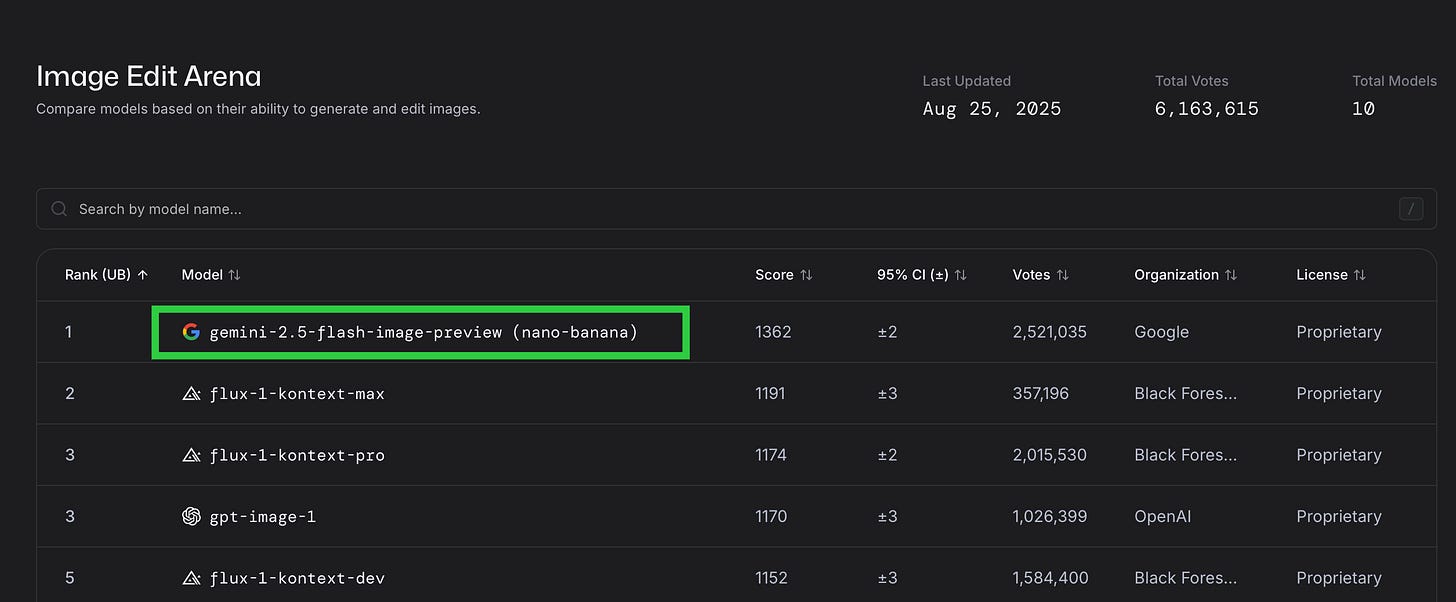

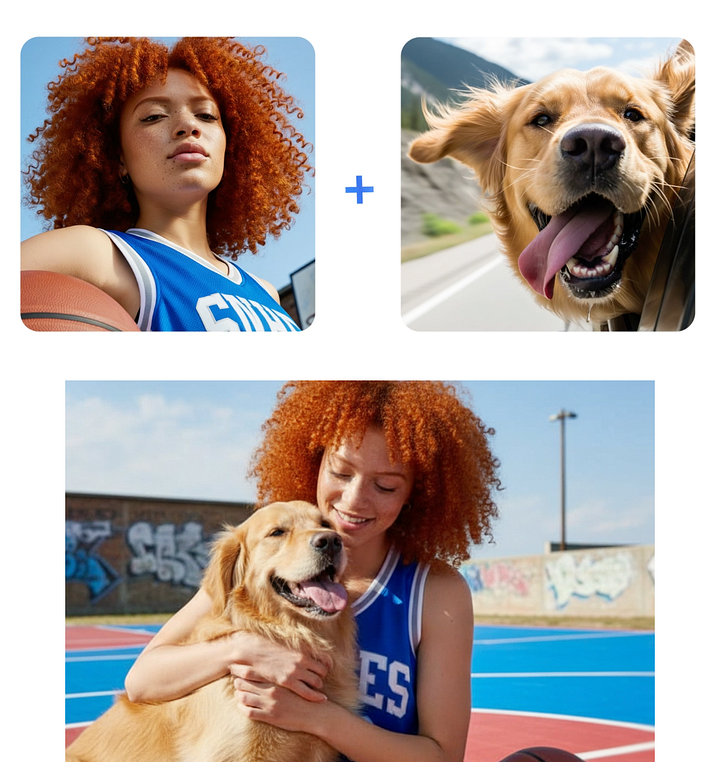

Banana By Google

Google has unveiled Gemini 2.5 Flash Image, the model that became a viral sensation under the nickname “nano banana” during testing. Now officially released, it has already topped the LM Arena Image Edit leaderboard, pulling far ahead of competitors such as Flux-Kontext. The model introduces precise, multi-step editing that keeps character details intact even as users apply multiple rounds of changes. It can blend images, mix artistic styles, and adjust entire scenes through simple natural language prompts, all while using multimodal reasoning to make smart choices about context, like inserting plants that actually belong in a given setting. Available in Google AI Studio and through the API at $0.039 per image, the model undercuts rivals including OpenAI’s gpt-image and BFL’s Flux-Kontext. While it will not replace professional Photoshop workflows just yet, its ability to maintain likeness and consistency suggests a creative shift is coming, and it could open the door to a new wave of viral, Ghibli-style editing apps.


Grok Code

xAI has launched Grok Code Fast 1, a fast and affordable reasoning model built for agentic coding, delivering up to 6x faster inference than peers and strong results across major programming languages. Free for a limited time on platforms like GitHub Copilot and Cursor, it’s priced at $0.20 per million input tokens and $1.50 per million output tokens. Scoring 70.8% on SWE-Bench-Verified, it’s designed as a practical daily driver for developers, with a multimodal, extended-context version already in the works.
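To get a feel for what those rates mean in practice, here is a minimal Python sketch that estimates the cost of a single request from the per-token prices quoted above. The function name and the example token counts are hypothetical, and the sketch ignores any caching discounts or free-tier promotions, so treat it as back-of-the-envelope math rather than an official pricing calculator.

```python
# Prices quoted in the item above, in USD per million tokens.
INPUT_PRICE_PER_M = 0.20
OUTPUT_PRICE_PER_M = 1.50


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of one request at the listed rates."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


# Hypothetical request: 50,000 input tokens and 5,000 output tokens.
print(f"${estimate_cost(50_000, 5_000):.4f}")  # prints $0.0175
```

At these rates, output tokens cost 7.5x as much as input tokens, so long generated responses tend to dominate the bill more than large prompts do.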

Claude for Chrome

Anthropic has launched the research preview of Claude for Chrome. Delivered as a Chrome extension, it opens in a sidebar, receives the user’s prompt, and then takes control of the active tab to carry out the requested work. Early impressions suggest it could become a very practical way to bring agentic workflows directly into the browser.

News of the week 🌍

Gen AI in Google Vids

Google is giving Google Vids a major boost, adding new AI tools that make video creation faster, smarter, and more accessible. Teams can now turn a single photo into an eight-second animated clip with sound using Veo 3, while AI avatars let anyone deliver polished scripts for trainings, onboarding, or presentations without the need for recording. Editing is becoming simpler with automatic transcript trimming that removes filler words and pauses in just a few clicks, and upcoming features like noise cancellation and customizable backgrounds will make videos even more professional. To help users get started, Google is launching a “Vids on Vids” learning series that walks through planning, recording, and editing with AI assistance. Already embraced by organizations such as Mercer International and Fullstory, Vids is proving its ability to cut production time from weeks to hours, showing how AI is reshaping the way companies tell their stories.

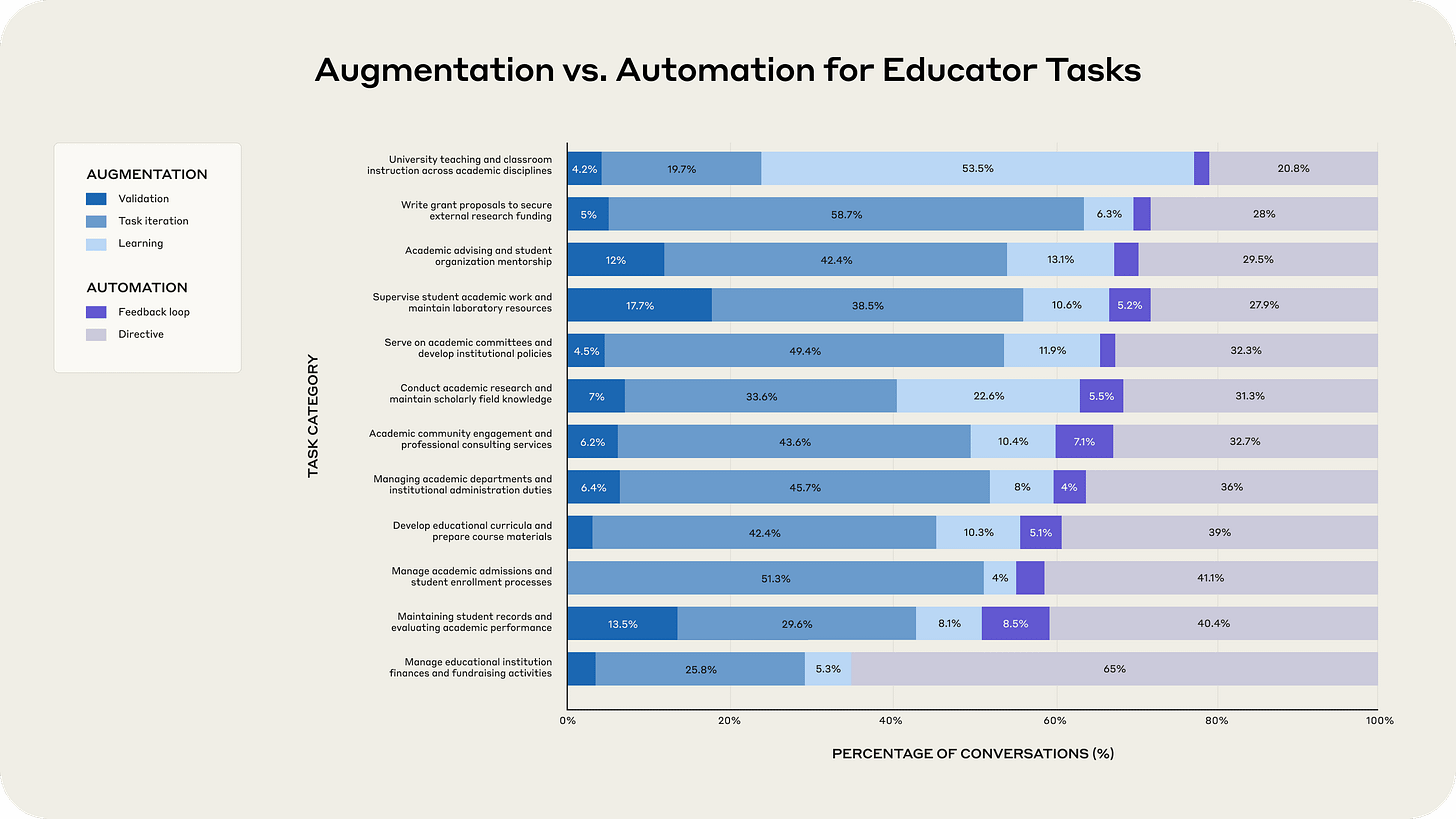

Anthropic Education Report

Anthropic analyzed over 74,000 educator conversations on Claude, revealing that professors most often use AI for curriculum design, followed by research support and some evaluation of student work. Many also experiment with Artifacts, creating tools like interactive labs, grading rubrics, and dashboards. Administrative tasks are frequently automated, while teaching and advising see less AI involvement. Grading emerged as the most polarizing use case, with heavy reliance in nearly half of assessment conversations despite being viewed as one of AI’s weaker skills. The findings show educators are embracing AI unevenly, with adoption patterns differing greatly from one classroom to another.

Image Inputs in Codex

OpenAI has rolled out a major update to Codex, bringing image inputs to the Codex CLI along with a range of new features that make it a stronger coding partner. Developers can now upgrade to version 0.24 to access the improvements, which include a new IDE extension, seamless movement of tasks between local and cloud environments, built-in code reviews in GitHub, and an overhauled CLI experience. All of these capabilities are powered by GPT-5 and available through existing ChatGPT plans.

New AI Translation in Google

Google has introduced new AI-powered features in Google Translate, using Gemini models to make real-time conversations and language practice easier. Users can now hold back-and-forth live conversations in over 70 languages with audio and on-screen translations that adapt to pauses, accents, and noise, rolling out first in the U.S., India, and Mexico. A new language practice feature creates personalized listening and speaking sessions tailored to skill level and goals, starting with English–Spanish/French/Portuguese learners. These upgrades build on Translate’s 1 trillion words translated each month and push it beyond basic translation toward more natural conversation and language learning.

AI Agents x Science

AI2 has launched Asta, a new ecosystem built to accelerate science with trustworthy agentic AI. It combines three parts: research agents that help scientists review literature, analyze data, and plan experiments; AstaBench, a rigorous benchmarking suite for evaluating AI agents on real scientific tasks; and Asta resources, a collection of open-source agents, scientific models, and tools like the Semantic Scholar API. Designed for transparency and reproducibility, Asta aims to augment rather than replace researchers, offering reliable AI collaborators while setting clear standards for the field. With everything released as open-source, AI2 invites the global community to help shape the future of scientific AI.

Useful tools ⚒️

TraceRoot.AI - Fix bugs faster with open source, AI native observability

Command A Reasoning - Enterprise-grade control for AI agents

Tokyo - Tracking AI usage and cost by customer

AG2 - Instantly create & deploy MCP servers from any API spec

Codalogy AI - Visualize Any Codebase Instantly

Understand any code architecture in minutes. Codalogy analyzes your codebase, breaks it into clear components, and maps functionality & dependencies—so you can skip the digging and simply explore your code visually, coffee in hand.

Weekly Guides 📕

How to build a Search Agent with Parallel AI’s API

Refactoring a Next.js & Tailwind app with Cursor

How to Master AI Agents in 2025 (Full Guide)

Generative AI Roadmap 2025: Beginner to Pro Guide

AI Meme of the Week 🤡

AI Tweet of the Week 🐦

Do you miss o3?

(Bonus) Materials 🎁

How Anthropic is Shaping AI’s Role in Learning

How much energy does Google’s AI use

Six Facts about the Recent Employment Effects of Artificial Intelligence

The Context Window Problem: Scaling Agents Beyond Token Limits

If you missed our previous updates, don’t worry, here they are: