AI Safety Summit, Google Invests $2B In Anthropic, GPT-4 Beaten By Phind

PLUS HOT AI Tools & Tutorials

👋 Hey, I’m Daniil and welcome to a ✨ news edition ✨ of Creators’ AI. By subscribing, you directly support Creators' AI's mission to deliver top AI insights & practical knowledge without ads or clutter. Your subscription allows us to grow our dedicated team and curate the most important AI Tools, Stories, and Tutorials in one place. - Daniil

We are launching a community for active AI creators, makers & founders.

If you recently launched or are planning to launch your AI Product, this Community can help a lot with the following:

Marketing for your AI Product

Product Hunt Launch Insights & Support

Peer-to-peer education & direct access to AI influencers

Development of AI Products

Be part of this community to grow your AI work faster!

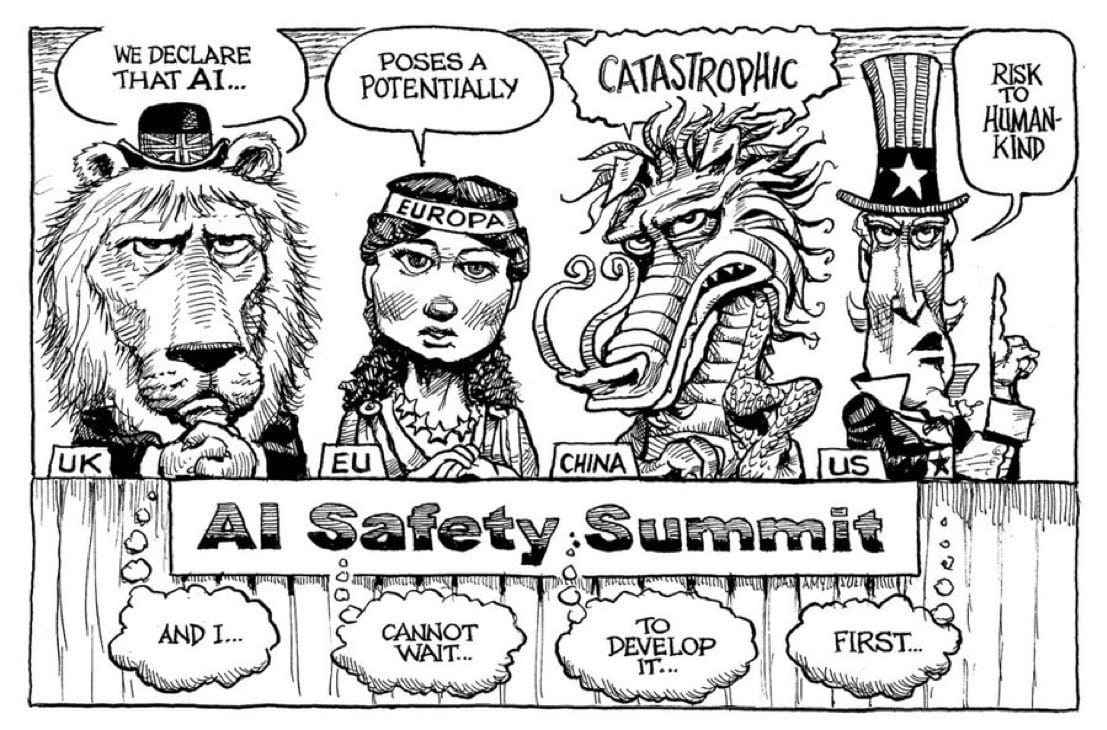

This week, the AI Safety Summit at Bletchley Park marked a significant milestone in AI safety with an international declaration to address AI risks, though concerns linger about how to balance regulation with innovation. Elsewhere, Phind introduced a fast code generation model that challenges GPT-4, and Google invested $2B in Anthropic. Let’s look at these and more of the week's most interesting news.

This Creators’ AI Edition:

Featured Materials 🎟️

News of the week 🌍

Useful tools ⚒️

Weekly Guides 📕

AI Meme of the Week 🤡

(Bonus) Materials 🎁

Sharing is caring! Refer someone who recently started a learning journey in AI. Make them more productive and earn rewards!

AI News in this edition 🌍

First AI Safety Summit unites global leaders and advances AI safety declaration.

Phind's speedy code model beats GPT-4 on coding accuracy.

Apple's M3 Macs excel in AI with faster GPUs and neural engines.

Artists' copyright claims against AI art generators are mostly dismissed.

Google invests $2B in Anthropic, solidifying its position in AI.

Featured Material 🎟️

AI Safety Summit at Bletchley Park

The world’s first AI Safety Summit, held at Bletchley Park near London, was a significant event that brought together delegates from 27 governments and heads of top AI companies.

The summit was attended by an array of global leaders, tech executives, academics, and civil society figures, including US Vice-President Kamala Harris, European Commission President Ursula von der Leyen, and Tesla CEO Elon Musk. The conference was hailed as a diplomatic coup by the UK’s Prime Minister, Rishi Sunak, after it produced an international declaration to address AI risks.

The US demonstrated its power to set the AI agenda, with President Biden issuing an executive order requiring tech firms to submit test results for powerful AI systems to the government before they are released to the public. The UK tech secretary, Michelle Donelan, said she was unfazed by the US initiatives, pointing out that the majority of cutting-edge AI companies, such as the ChatGPT developer OpenAI, are based in the US.

Observing the AI Safety Summit at Bletchley Park, I found it to be a significant milestone in the field of AI safety. The gathering of global leaders, tech executives, and academics signaled a collective recognition of the importance of AI safety. The international declaration, backed by over 25 countries and the EU, was a positive step towards addressing the risks posed by AI development.


However, the summit also highlighted the challenges that lie ahead. While the US’s move to require tech firms to submit test results for powerful AI systems is commendable, it also raises questions about the balance between regulation and innovation. The fact that the majority of cutting-edge AI companies are based in the US could potentially skew the global AI agenda. Furthermore, while the Bletchley Declaration was a welcome step, some experts expressed concerns that it falls short of the binding arrangements needed to control and shape the fast-evolving field of AI. Overall, the summit was a promising start, but there is still much work to be done.

Here are the key takeaways from the AI Safety Summit:

UK convened a summit for the safe development of frontier AI.

Countries agreed to the Bletchley Declaration on AI safety.

Agreement on state-led testing of next-gen AI models.

France to host the next AI safety summit in 2024.

News of the week:

Phind launched a faster code generation model that it says surpasses GPT-4 on coding tasks, reporting 74.7% accuracy with responses in about 10 seconds. The model supports up to 16k context for in-depth coding questions, offering GPT-4-level responses in less time.

The US government's new executive order addresses AI safety by requiring developers to share testing results before release. It focuses on collaboration with the industry, preferring guidelines over strict regulations. The order lacks concrete enforcement and emphasizes "best practices" instead. Even so, it demonstrates the US government's commitment to AI safety amid rapid advancements.

Apple's latest Macs, equipped with M3 chips, deliver strong AI performance thanks to an enhanced GPU with a dynamic caching feature and faster neural engines. The top-end M3 Max offers a 16-core CPU and 40-core GPU with up to 128GB of unified memory, making it an appealing choice for AI and ML developers.

Check our archive of great tools and AI tutorials for generating images. By getting the App, you can seamlessly access all our previous posts.

Artists' initial copyright claims against AI art generators like Stability AI, Midjourney, and DeviantArt were largely dismissed by a federal judge. Only one direct infringement claim moves forward.

Google is investing $2B in Anthropic, securing a significant stake in the company to stay competitive in the AI market. With Amazon's prior investment, Anthropic has amassed substantial funding, positioning itself among leading AI providers.

Users can now use all GPT tools within a single chat. Some users are receiving a GPT-4 update that lets them use Vision, Advanced Data Analysis (formerly Code Interpreter), Browsing, and DALL-E 3 together in one conversation. Another update has raised concerns among speculative investors.

Useful Tools of the Week⚒️

ChefGPT - A cooking assistant that first learns what you have to work with and then gives you instructions accordingly.

Here is how it works:

Add the ingredients you have at home.

Select what meal you want to cook.

Select the kitchen utensils you have.

Select how much time you have.

Try this tool now and let us know in the comments below about the dish you are going to cook with it.

Trickle - Transforms your screenshots using GPT-4. Beyond summarization, it decodes the essence of your captures.

Robin AI - Bringing the power of AI contract copilot to lawyers where they work most productively, in Microsoft Word.

GenVid - Generate SaaS demo videos using AI.

Parse Policy - An AI that reads privacy policies for you.

Creators’ AI could be a valuable gift for your friend, colleague, or family member. Gifting books is smart, but giving an AI newsletter is a superb move 😎

Weekly Guides 📕

Make Artificial Background Sets for your Video with this AI Tool.

How to create a Chrome extension by just writing a prompt.

How to Make AI Animations with AnimateDiff + A1111

AI Meme of the Week 🤡

(Bonus) Material 🏆

How to use IP-adapter controllers for consistent faces

Lightweight tool to identify Data Contamination in LLM evaluation without accessing the Training Data

Here is how one developer built an AI agent that lives inside his email inbox, along with his learnings & code

⚡️ How was this week in AI? Share your content and ideas in the comments to this post so we can discuss or include them in the next edition!